Test Page

Description

The EDGAR Server Log data series was originally based on our forthcoming Journal of Behavioral Finance paper available here. Please read the section "The EDGAR Server Log" for additional details. We have updated and extended the data series in the files provided here.

Web servers maintain a log of all page requests. For each request, a typical server logs the client IP address, the timestamp of the request, and the page requested, along with a few other qualifying items. Most servers create this log automatically, usually recording it in a file that uses the Common Log Format (or an extension of it) endorsed by the World Wide Web Consortium (W3C).
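To illustrate what a single log entry holds, the sketch below parses one line in the Common Log Format (host, identity, user, timestamp, request line, status code, response size) with a regular expression. It is a generic example of the format, not the SEC's own processing. The files the SEC publishes are already delivered as CSV tables. The function name `parse_clf_line` is hypothetical.

```python
import re
from datetime import datetime

# Common Log Format: host ident authuser [timestamp] "request" status bytes
CLF_PATTERN = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<authuser>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-)'
)


def parse_clf_line(line):
    """Parse one Common Log Format line into a dict, or return None if it does not match."""
    match = CLF_PATTERN.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # CLF timestamps look like 10/Oct/2000:13:55:36 -0700
    fields["timestamp"] = datetime.strptime(fields["timestamp"], "%d/%b/%Y:%H:%M:%S %z")
    fields["status"] = int(fields["status"])
    # A "-" in the size field means no response body was sent
    fields["bytes"] = 0 if fields["bytes"] == "-" else int(fields["bytes"])
    return fields
```

For example, `parse_clf_line('127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] "GET /apache_pb.gif HTTP/1.0" 200 2326')` returns a dict whose `status` is `200` and whose `bytes` is `2326`.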

After our FOIA request for the data in 2013, the SEC started making the EDGAR log file data available online at:

https://www.sec.gov/data/edgar-log-file-data-set

The data are typically released with a delay of roughly one year; thus, our current data series covers 2003-2015. The SEC website provides some documentation but does not describe the problems in the data that the SEC discussed with us at the time of the initial release. Significant problems can generally be spotted by looking at the file sizes (see below).
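One simple way to screen for such problems is to compare each day's file size with the sizes of neighboring days. The sketch below is a hypothetical heuristic, not the procedure we used. `window` and `tolerance` are arbitrary illustrative values. The input is a date-sorted list of (date, size) pairs, which could be built, for example, with `os.path.getsize` on the downloaded daily files.

```python
import statistics


def flag_size_anomalies(sizes, window=15, tolerance=0.5):
    """Flag days whose file size deviates sharply from the median of nearby days.

    sizes: list of (date_label, size_in_bytes) tuples sorted by date.
    A day is flagged when its size is below (1 - tolerance) or above
    (1 + tolerance) times the median size of the other days in its window.
    """
    flagged = []
    half = window // 2
    for i, (day, size) in enumerate(sizes):
        start = max(0, i - half)
        stop = min(len(sizes), i + half + 1)
        # Compare against neighbors only, excluding the day being tested
        neighbors = [s for j, (_, s) in enumerate(sizes[start:stop], start=start) if j != i]
        if not neighbors:
            continue
        local_median = statistics.median(neighbors)
        if local_median == 0:
            continue
        ratio = size / local_median
        if ratio < 1 - tolerance or ratio > 1 + tolerance:
            flagged.append(day)
    return flagged
```

A median is used rather than a mean so that a single damaged day does not distort the baseline for its neighbors.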

We provide a substantially compressed version of the data. If you choose to download the data directly from the SEC site instead, you will get one zip file per day (logYYYYMMDD.zip) containing two files: a CSV file with the data and a README file documenting the variables. The data taken directly from the SEC website occupy 1.73 terabytes (uncompressed) and consist of 4,839 daily files (for 2003-2015).
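The following is a minimal sketch for working with the raw SEC files. It assumes they have been downloaded to a local folder. It generates the expected daily file names and streams the rows of the CSV inside one daily zip without loading the whole file into memory. The helper names are hypothetical. The CSV member is located by its `.csv` extension rather than by an assumed exact file name.

```python
import csv
import io
import zipfile
from datetime import date, timedelta


def daily_zip_names(start, end):
    """Yield the expected logYYYYMMDD.zip name for each day from start to end, inclusive."""
    day = start
    while day <= end:
        yield f"log{day:%Y%m%d}.zip"
        day += timedelta(days=1)


def iter_daily_rows(path):
    """Stream the rows of the CSV data file inside one daily zip as dicts."""
    with zipfile.ZipFile(path) as archive:
        # Each archive holds a CSV data file and a README; pick the CSV by extension
        csv_name = next(n for n in archive.namelist() if n.lower().endswith(".csv"))
        with archive.open(csv_name) as raw:
            yield from csv.DictReader(io.TextIOWrapper(raw, encoding="utf-8", newline=""))
```

Because `iter_daily_rows` is a generator, the archive stays open only while its rows are being consumed.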

Data Issues

As noted above, there are periods when the data are incomplete. Although the files begin in January 2003, the data stream is not consistent until March 2003; thus, for our research we usually begin in March 2003. In addition, the SEC labeled the files between September 23, 2005 and May 10, 2006 as "lost or damaged", which is visible in the volatility of the file sizes during this period. We recommend excluding this period from your analysis.
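These recommendations translate into a simple date filter. The period boundaries come from the text above. The sketch treats both endpoints of each period as excluded, which is an assumption. `is_usable_day` is a hypothetical helper name.

```python
from datetime import date

# Periods recommended for exclusion; both endpoints are treated as excluded (an assumption)
EXCLUDED_PERIODS = [
    (date(2003, 1, 1), date(2003, 2, 28)),   # data stream not yet consistent
    (date(2005, 9, 23), date(2006, 5, 10)),  # files labeled "lost or damaged" by the SEC
]


def is_usable_day(day):
    """Return True if the date falls outside every excluded period."""
    return not any(start <= day <= end for start, end in EXCLUDED_PERIODS)
```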

Data

The raw Server Log data take almost 2 terabytes of storage and include a large number of entries that are not useful for most analyses. By filtering out irrelevant entries and removing robot downloads, we shrink the data from more than 15 billion records to about 220 million records (about 1.5% of the original record count), which we then compress into a zip file of about 2.2 gigabytes. Each day is stored in a folder for its corresponding year and quarter. Each observation gives the download counts on a given day for a specific SEC filing that has at least one non-robot download.
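To locate a given day's file, you only need to map the date to its year and quarter. The `YYYY/QtrN` folder naming below is a hypothetical convention for illustration. Check the actual folder names in the downloaded archive before relying on it.

```python
from datetime import date


def quarter_folder(day):
    """Return a hypothetical 'YYYY/QtrN' folder name for the quarter containing `day`."""
    quarter = (day.month - 1) // 3 + 1  # months 1-3 -> 1, 4-6 -> 2, 7-9 -> 3, 10-12 -> 4
    return f"{day.year}/Qtr{quarter}"
```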

We use the following filters to compress the data:

- Observations flagged as a web crawler or an index-page request, observations with a server status code >= 300, and observations missing the cik, accession number, IP address, or date are excluded from all tabulations. Note that a request not labeled as a web crawler can still be a robot. The User-Agent header sent with each request allows web crawlers to self-identify. Although large search firms such as Google have an incentive to self-identify, programs written to download SEC data have no reason to make this declaration, and most robots likely do not.

- Remaining robot downloads are defined as all downloads from any single IP address that makes >= 50 downloads within a single day. These downloads are not included in the counts in the daily compressed files. Note that this filter requires each daily file to be traversed twice.

- Robot counts are tabulated only for the calendar summary file, not for the daily files. Daily files only include accession numbers (i.e., unique filings) with one or more non-robot downloads.
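The filters described above can be sketched in Python as follows. This is a minimal sketch, not our production code. It makes four assumptions. First, each row is a dict keyed by the SEC README column names (`ip`, `date`, `cik`, `accession`, `code`, `idx`, `crawler`). Second, `crawler` and `idx` equal 1 for flagged requests. Third, robot IPs are counted after the first-stage filter. Fourth, a missing `code` value is treated as passing.

```python
from collections import Counter

ROBOT_THRESHOLD = 50  # downloads from one IP address in one day


def passes_basic_filters(row):
    """Stage 1: drop crawlers, index-page requests, status codes >= 300, and rows missing key fields."""
    if any(not row.get(field) for field in ("ip", "date", "cik", "accession")):
        return False
    # Values such as "0.0" or "200.0" may appear, so flags and codes are read as floats
    if float(row.get("crawler") or 0) == 1 or float(row.get("idx") or 0) == 1:
        return False
    # A missing code is treated as 0 here, i.e., it passes (an assumption)
    return float(row.get("code") or 0) < 300


def split_robot_downloads(rows):
    """Apply stage 1, then two passes to remove IPs with >= ROBOT_THRESHOLD downloads per day.

    Returns (non_robot_rows, robot_count).
    """
    kept = [row for row in rows if passes_basic_filters(row)]
    # Pass 1: count the remaining downloads for each (IP, date) pair
    per_ip_day = Counter((row["ip"], row["date"]) for row in kept)
    # Pass 2: keep only rows whose IP stayed below the threshold on that day
    non_robot = [row for row in kept if per_ip_day[(row["ip"], row["date"])] < ROBOT_THRESHOLD]
    return non_robot, len(kept) - len(non_robot)
```

The robot count returned here is the kind of total that would go into the calendar summary file, while `non_robot` feeds the daily tabulation.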

To clarify, the daily files we provide contain one observation for every accession number (the number the SEC assigns to each unique filing) with a non-zero count of non-robot downloads. For each observation, the following items are tabulated:

- date -- the day the form was downloaded from the SEC site (YYYYMMDD).

- cik -- SEC-assigned central index key.

- accession -- the accession number assigned by the SEC. Each filing has a unique accession number.

- nr_total -- the non-robot total count of forms downloaded.

The format of each downloaded document can be identified by its file type. We tabulate the major file types separately; nr_total always equals the sum of these subsets.

- htm -- web form associated with most filings. One can argue that if you are attempting to measure downloads read by individuals, this format is probably most relevant.

- txt -- the complete submission, including all documents and HTML markup, is in the .txt file. These files are typically used for machine processing of the documents.

- xbrl -- eXtensible Business Reporting Language files, which typically provide tables of financial data.

- other -- any form whose file type is not htm, txt or xbrl.
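One way to produce these per-filing counts from the filtered rows is sketched below. The classification rules are illustrative assumptions. In particular, treating `.xml` files and names containing "xbrl" as XBRL is a simplification, since not every XML file on EDGAR is XBRL. The column holding the requested file name is assumed to be called `extention`; adjust `file_field` if it differs.

```python
from collections import defaultdict

FILE_TYPES = ("htm", "txt", "xbrl", "other")


def classify_file_type(name):
    """Map a requested file name or extension to htm, txt, xbrl, or other (assumed rules)."""
    name = name.lower()
    if name.endswith((".htm", ".html")):
        return "htm"
    if name.endswith(".txt"):
        return "txt"
    if name.endswith(".xml") or "xbrl" in name:  # simplification: not all XML is XBRL
        return "xbrl"
    return "other"


def tabulate_daily(rows, file_field="extention"):
    """Count non-robot downloads per (date, cik, accession), split by file type."""
    table = defaultdict(lambda: dict.fromkeys(FILE_TYPES, 0))
    for row in rows:
        key = (row["date"], row["cik"], row["accession"])
        table[key][classify_file_type(row[file_field])] += 1
    result = []
    for (day, cik, accession), counts in sorted(table.items()):
        # nr_total equals the sum of the four file-type subsets by construction
        result.append({"date": day, "cik": cik, "accession": accession,
                       "nr_total": sum(counts.values()), **counts})
    return result
```

Because every row falls into exactly one of the four categories, the identity between nr_total and the subset sum holds automatically.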

By merging the Server Log data with the master.idx files, we are also able to provide the form type and filing date. The merge succeeds in the vast majority of cases; when we are unable to match the log data, the form is identified as "missing" and filing_date is set to -99.

- form -- form type (e.g., 10-K, 10-Q, 8-K, 4). The SEC provides a list of current form types here; note that many forms, such as 10-K405, no longer exist but appear in earlier years.

- filing_date -- the date on which the form was filed (YYYYMMDD).
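The merge with master.idx can be sketched as a dictionary lookup keyed on accession number. This sketch makes three assumptions. First, master.idx uses the pipe-delimited layout `CIK|Company Name|Form Type|Date Filed|Filename`. Second, the accession number is the base name of the `.txt` filename. Third, the log's `accession` values use the same dashed format. `parse_master_idx` and `add_form_info` are hypothetical helpers, not our production code.

```python
def parse_master_idx(lines):
    """Build {accession: (form_type, filing_date)} from the lines of a master.idx file."""
    lookup = {}
    for line in lines:
        parts = line.rstrip("\n").split("|")
        # Data lines have five fields and start with a numeric CIK; header lines do not
        if len(parts) != 5 or not parts[0].strip().isdigit():
            continue
        _cik, _company, form_type, date_filed, filename = parts
        base = filename.strip().rsplit("/", 1)[-1]
        accession = base[:-4] if base.endswith(".txt") else base
        # Store the filing date as an integer YYYYMMDD, matching filing_date above
        lookup[accession] = (form_type.strip(), int(date_filed.strip().replace("-", "")))
    return lookup


def add_form_info(record, lookup):
    """Return a copy of record with form and filing_date; unmatched filings get "missing" and -99."""
    form, filing_date = lookup.get(record["accession"], ("missing", -99))
    return {**record, "form": form, "filing_date": filing_date}
```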

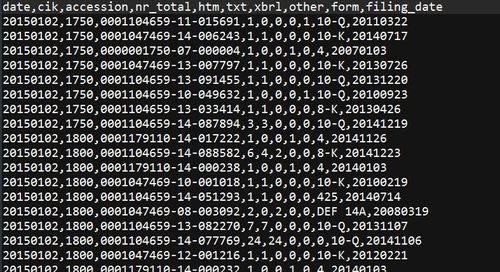

A sample of the first few lines of the data:

Download the compressed data (2.12 gig)

As a by-product of compressing the data, we also create a daily time series of totals by download type. The variables are: date, nr_total, htm, txt, xbrl, other, robot, trading_day. The variables are defined as before, with the addition of robot (the count of robot downloads) and trading_day, a dummy variable indicating whether the day corresponds to a CRSP calendar date.
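A row of the calendar summary can be assembled from a day's tabulated records and its robot count, as in the sketch below. The `trading_days` argument is a hypothetical set of CRSP calendar dates that you would supply yourself. The function name `summarize_day` is illustrative.

```python
FILE_TYPES = ("htm", "txt", "xbrl", "other")


def summarize_day(day, daily_records, robot_count, trading_days):
    """Build one calendar-summary row from a day's per-filing records and robot count."""
    row = {"date": day, "nr_total": sum(r["nr_total"] for r in daily_records)}
    for file_type in FILE_TYPES:
        row[file_type] = sum(r[file_type] for r in daily_records)
    row["robot"] = robot_count
    # trading_day is 1 if the date appears in the supplied CRSP calendar, else 0
    row["trading_day"] = int(day in trading_days)
    return row
```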

Download the Calendar Summary data (215 KB).